Lately I’ve been following a discussion that worries me quite a bit: to what extent are we delegating our thinking to AI? It’s not an abstract or philosophical question; it’s something very real that I’m seeing day to day in our profession and in society in general.

Recently I read an article by Erik Johannes Husom titled “Outsourcing thinking” that, among other things, discusses the concept of the “lump of cognition fallacy”. The idea is that, just as it is an economic fallacy to assume there’s a fixed amount of work to do, it is a fallacy to assume there’s a fixed amount of thinking to do: if machines think for us, we’ll just think about other things.

But I believe the problem is much more complicated than that.

The Typical Criticism of LLMs

The most common criticism of Large Language Models (LLMs) is that they can deprive us of cognitive skills. The typical argument is that delegating certain tasks can cause a kind of mental atrophy. And honestly, the idea of “use it or lose it” seems intuitively and empirically correct to me.

What matters most is not whether this is true, but which types of use are more problematic than others.

The Developer Problem: Microsoft Admits It

What worries me most is that even Microsoft has internally recognized that tools like Copilot and ChatGPT are affecting critical thinking at work. Its employees who use these technologies reportedly experience “long-term dependency” problems.

Several studies and articles point to the same concerns:

- Junior developers who struggle without AI assistance

- Developers who become dependent on AI for basic problem-solving

- Risk of “cognitive decline” in software engineering skills

When Should We Avoid Using LLMs?

Andy Masley, in his article “The lump of cognition fallacy”, lists cases where it’s clearly harmful to outsource your cognition:

It’s bad to outsource your cognition when:

- It builds complex tacit knowledge you’ll need to navigate the world in the future

- It’s an expression of care and presence for someone else

- It’s a valuable experience in itself

- It’s deceptive to fake it

- It focuses on a critical problem where you don’t fully trust who you’re outsourcing to

Personal Communication and Writing

The point about “it’s deceptive to fake it” doesn’t just apply to dating apps or intimate situations. Personal communication in general is an area where how we express ourselves matters, both for us and for those who speak or write with us.

When we let a model transform our words and phrases, we break the expectations that communication rests on. The words we choose and how we formulate our sentences carry a lot of meaning. Direct communication will suffer if we let language models contaminate this type of interaction.

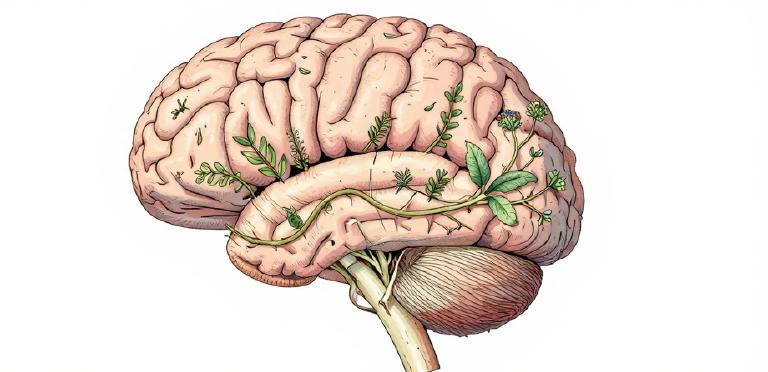

The Error of the “Extended Mind”

Another point I want to discuss is the idea of the “extended mind”:

“Much of our cognition isn’t limited to our skull and brain, it also happens in our physical environment, so much of what we define as our minds could be said to exist in the physical objects around us.”

“It seems quite arbitrary whether it happens in your brain’s neurons or in your phone’s circuits.”

This assertion is simply absurd. The fact that something happens in your brain rather than in a computer makes all the difference in the world. Humans are more than information processors.

What Can We Do?

I’m not saying that nothing should be automated by LLMs. But I think many are underestimating what we lose when we delegate.

Some Principles I Try to Follow:

- Use AI as a tool, not a replacement: AI helps me, but doesn’t think for me

- Keep critical thinking active: question AI’s responses

- Don’t delegate tasks that build tacit knowledge: those “boring” tasks often teach us the most

- Be transparent about AI use: when I use AI on something, I say so

- Practice without AI regularly: keep skills sharp

Critical Thinking: The Key Skill of 2026

What’s curious is that, according to multiple sources, critical thinking will be the differentiating skill in 2026. The most valued skills are:

- Critical thinking with AI

- Collaboration with AI tools

- AI literacy

- Prompt engineering

- Data awareness

Conclusion

We have a major challenge ahead: figuring out what chatbots are actually suited for in the long term. Personal communication may change forever, educational systems will need radical adaptations, and we need to reflect more carefully on which experiences in life really matter.

At the end of the day, the question is not “can I use AI for this?” but “should I use AI for this?” And that’s a question only you can answer.
